
This AI can produce stunning images with just a few words of description, but is it art?

What does it mean to make art when an algorithm automates so much of the creative process itself?

A picture may be worth a thousand words, but thanks to an artificial intelligence program called DALL-E 2, you can have a professional-looking image with far fewer.

DALL-E 2 is a new neural network algorithm that creates a picture from a short phrase or sentence that you provide. The program, which was announced by the artificial intelligence research laboratory OpenAI in April 2022, hasn’t been released to the public. But a small and growing number of people–myself included–have been given access to experiment with it.

As a researcher studying the nexus of technology and art, I was keen to see how well the program worked. After hours of experimentation, it’s clear that DALL-E 2–while not without shortcomings–is leaps and bounds ahead of existing image generation technology. It raises immediate questions about how these technologies will change how art is made and consumed. It also raises questions about what it means to be creative when DALL-E 2 seems to automate so much of the creative process itself.

A STAGGERING RANGE OF STYLE AND SUBJECTS

OpenAI researchers built DALL-E 2 from an enormous collection of images with captions. They gathered some of the images online and licensed others.

Using DALL-E 2 looks a lot like searching for an image on the web: you type a short phrase into a text box, and it gives back six images.

But instead of being culled from the web, the program creates six brand-new images, each of which reflects some version of the entered phrase. (Until recently, the program produced 10 images per prompt.) For example, when some friends and I gave DALL-E 2 the text prompt “cats in devo hats,” it produced 10 images that came in different styles.

Nearly all of them could plausibly pass for professional photographs or drawings. While the algorithm did not quite grasp “Devo hat”–the strange helmets worn by the New Wave band Devo–the headgear in the images it produced came close.

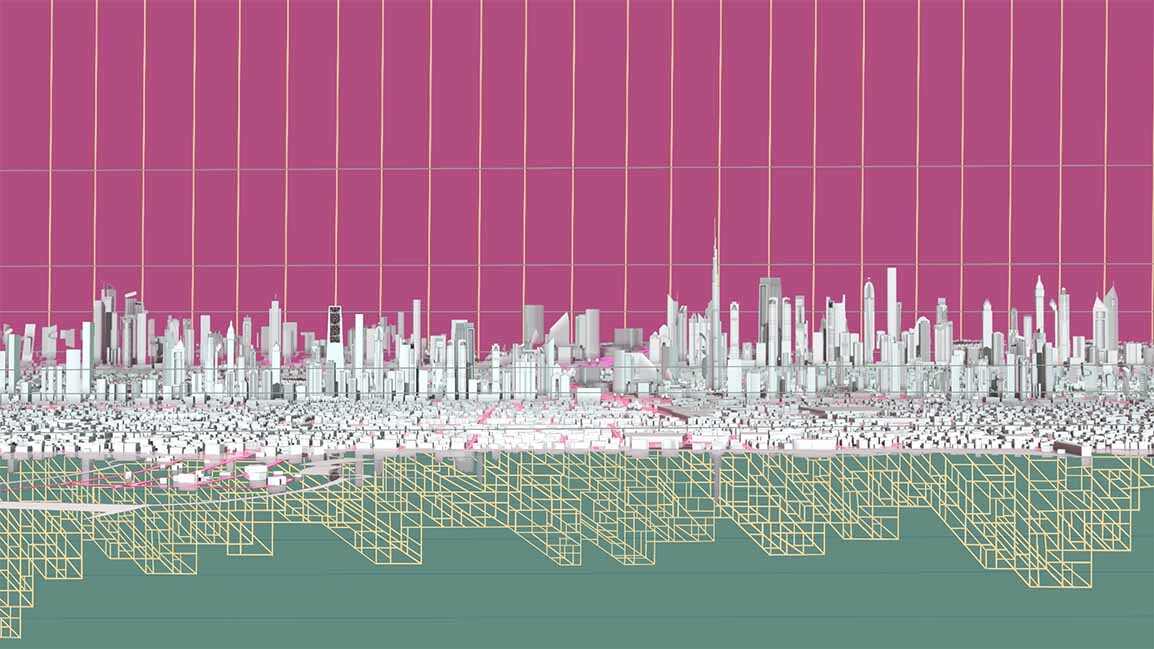

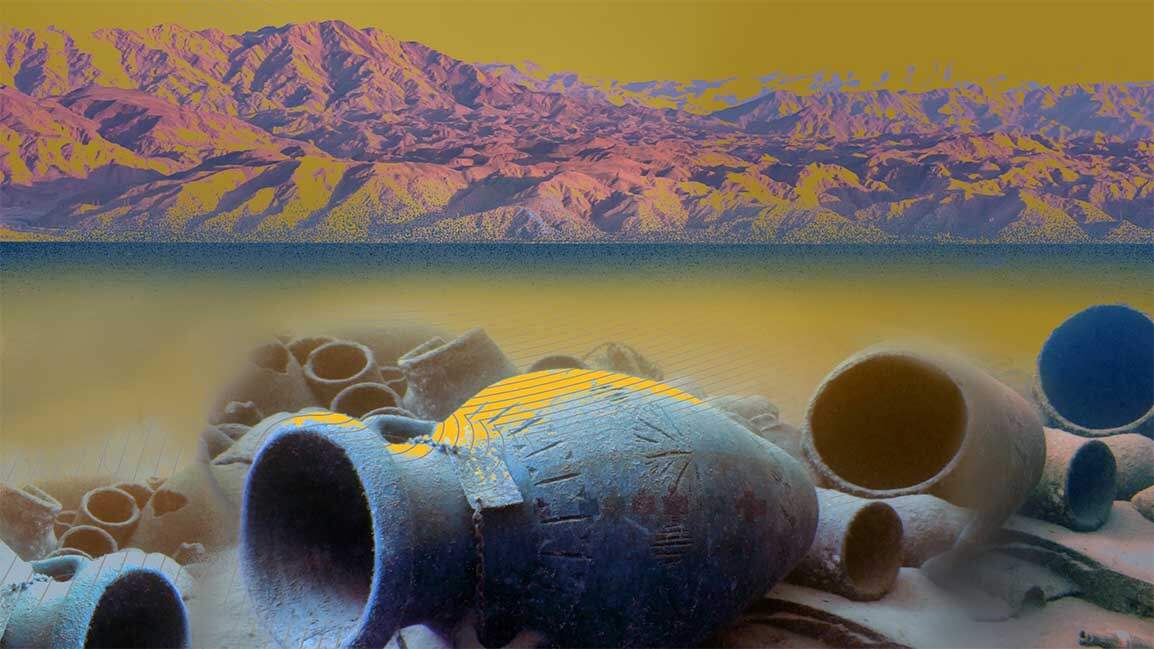

Over the past few years, a small community of artists has been using neural network algorithms to produce art. Many of these artworks have distinctive qualities: they almost look like real images, but with odd distortions of space – a sort of cyberpunk Cubism. The most recent text-to-image systems often produce dreamy, fantastical imagery that can be delightful but rarely looks real.

DALL-E 2 offers a significant leap in the quality and realism of the images. It can also mimic specific styles with remarkable accuracy. If you want images that look like actual photographs, it’ll produce six life-like images. If you want prehistoric cave paintings of Shrek, it’ll generate six pictures of Shrek as if they’d been drawn by a prehistoric artist.

It’s staggering that an algorithm can do this. Each set of images takes less than a minute to generate. Not all of the images will look pleasing to the eye, nor do they necessarily reflect what you had in mind. But, even with the need to sift through many outputs or try different text prompts, there’s no other existing way to pump out so many great results so quickly–not even by hiring an artist. And, sometimes, the unexpected results are the best.

In principle, anyone with enough resources and expertise can make a system like this. Google Research recently announced an impressive, similar text-to-image system, and one independent developer is publicly developing their own version that anyone can try right now on the web, although it’s not yet as good as DALL-E 2 or Google’s system.

It’s easy to imagine these tools transforming the way people make images and communicate, whether via memes, greeting cards, advertising–and, yes, art.

WHERE’S THE ART IN THAT?

I had a moment early on while using DALL-E 2 to generate different kinds of paintings, in all different styles–like “Odilon Redon painting of Seattle”–when it hit me that this was better than any painting algorithm I’ve ever developed. Then I realized that it is, in a way, a better painter than I am.

In fact, no human can do what DALL-E 2 does: create such a high-quality, varied range of images in mere seconds. If someone told you that a person made all these images, of course you’d say they were creative.

But this does not make DALL-E 2 an artist. Even though it sometimes feels like magic, under the hood it is still a computer algorithm, rigidly following instructions from the algorithm’s authors at OpenAI.

If these images succeed as art, they are products of how the algorithm was designed, the images it was trained on, and–most importantly–how artists use it.

You might be inclined to say there’s little artistic merit in an image produced by a few keystrokes. But in my view, this line of thinking echoes the classic take that photography cannot be art because a machine did all the work. Today the human authorship and craft involved in artistic photography are recognized, and critics understand that the best photography involves much more than just pushing a button.

Even so, we often discuss works of art as if they directly came from the artist’s intent. The artist intended to show a thing, or express an emotion, and so they made this image. DALL-E 2 does seem to shortcut this process entirely: you have an idea and type it in, and you’re done.

But when I paint the old-fashioned way, I’ve found that my paintings come from the exploratory process, not just from executing my initial goals. And this is true for many artists.

Take Paul McCartney, who came up with the track “Get Back” during a jam session. He didn’t start with a plan for the song; he just started fiddling and experimenting and the band developed it from there.

Picasso described his process similarly: “I don’t know in advance what I am going to put on canvas any more than I decide beforehand what colors I am going to use . . . Each time I undertake to paint a picture I have a sensation of leaping into space.”

In my own explorations with DALL-E 2, one idea would lead to another, which would lead to another, and eventually I’d find myself in a completely unexpected, magical new terrain, very far from where I’d started.

PROMPTING AS ART

I would argue that the art, in using a system like DALL-E 2, comes not just from the final text prompt, but from the entire creative process that led to that prompt. Different artists will follow different processes and end up with different results that reflect their own approaches, skills and obsessions.

I began to see my experiments as a set of series, each a consistent dive into a single theme, rather than a set of independent wacky images.

Ideas for these images and series came from all around, often linked by a set of stepping stones. At one point, while making images based on contemporary artists’ work, I wanted to generate an image of site-specific installation art in the style of the contemporary Japanese artist Yayoi Kusama. After trying a few unsatisfactory locations, I hit on the idea of placing it in La Mezquita, a former mosque and church in Córdoba, Spain. I sent the picture to an architect colleague, Manuel Ladron de Guevara, who is from Córdoba, and we began riffing on other architectural ideas together.

This became a series on imaginary new buildings in different architects’ styles.

So I’ve started to consider what I do with DALL-E 2 to be both a form of exploration and a form of art, even if it’s often amateur art like the drawings I make on my iPad.

Indeed some artists, like Ryan Murdoch, have advocated for prompt-based image-making to be recognized as art. He points to the experienced AI artist Helena Sarin as an example.

“When I look at most stuff from Midjourney”–another popular text-to-image system–“a lot of it will be interesting or fun,” Murdoch told me in an interview. “But with [Sarin’s] work, there’s a through line. It’s easy to see that she has put a lot of thought into it, and has worked at the craft, because the output is more visually appealing and interesting, and follows her style in a continuous way.”

Working with DALL-E 2, or any of the new text-to-image systems, means learning its quirks and developing strategies for avoiding common pitfalls. It’s also important to know about its potential harms, such as its reliance on stereotypes, and potential uses for disinformation. Using DALL-E 2, you’ll also discover surprising correlations, like the way everything becomes old-timey when you use an old painter, filmmaker or photographer’s style.

When I have something very specific I want to make, DALL-E 2 often can’t do it. The results would require a lot of difficult manual editing afterward. It’s when my goals are vague that the process is most delightful, offering up surprises that lead to new ideas that themselves lead to more ideas and so on.

CRAFTING NEW REALITIES

These text-to-image systems can help users imagine new possibilities as well.

Artist-activist Danielle Baskin told me that she always works “to show alternative realities by ‘real’ example: either by setting scenarios up in the physical world or doing meticulous work in Photoshop.” DALL-E 2, however, “is an amazing shortcut because it’s so good at realism. And that’s key to helping others bring possible futures to life – whether it’s satire, dreams or beauty.”

She has used it to imagine an alternative transportation system and plumbing that transports noodles instead of water, both of which reflect her artist-provocateur sensibility.

Similarly, artist Mario Klingemann’s architectural renderings with the tents of homeless people could be taken as a rejoinder to my architectural renderings of fancy dream homes.

It’s too early to judge the significance of this art form. I keep thinking of a phrase from the excellent book “Art in the After-Culture”–“The dominant AI aesthetic is novelty.”

Surely this would be true, to some extent, for any new technology used for art. The first films by the Lumière brothers in the 1890s were novelties, not cinematic masterpieces; it amazed people to see images moving at all.

AI art software develops so quickly that there’s continual technical and artistic novelty. It seems as if, each year, there’s an opportunity to explore an exciting new technology–each more powerful than the last, and each seemingly poised to transform art and society.

This article is republished from The Conversation under a Creative Commons license.